Iztok Franko

How are airline personalization and experimentation connected? Is machine learning the answer?

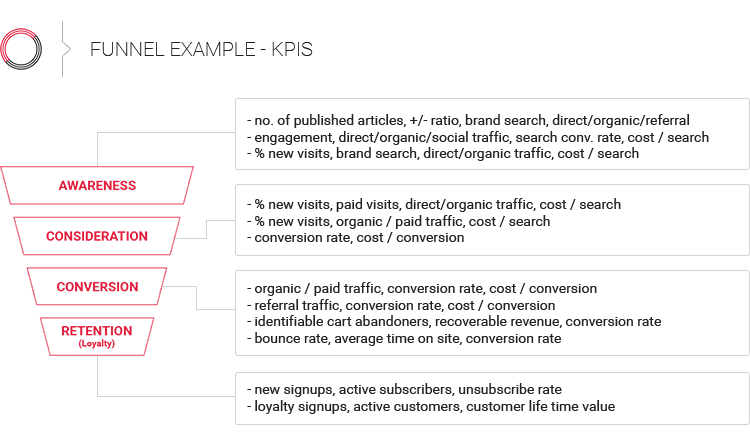

Do you have the right KPIs to measure the effectiveness of your optimization, personalization and machine learning activities?

I’ve seen so many articles and people talk about airline personalization. Almost all claim that it can’t be done without data science and machine learning.

On the other hand, there are not many resources that explain how you can know whether or not personalization and machine learning will actually work. You’ll find even fewer resources that talk about experimentation as the key element of your airline personalization program. Why are personalization and machine learning such popular topics among airlines, yet experimentation is rarely mentioned?

I believe if you really want to be great at personalization, and if you want to understand the value of your airline personalization activities, you need to combine both!

Why? To give you the answers, I talked to an expert in both areas (personalization and experimentation).

Ronny Kohavi, a Vice President and Technical Fellow at Airbnb, has worked in the fields of data mining and machine learning for more than 25 years. He is an authority in the area of experimentation and co-author of the book Trustworthy Online Controlled Experiments: A Practical Guide to A/B Testing.

Before he joined Airbnb, Ronny grew the experimentation platform (ExP) team at Microsoft to over 110 data scientists, developers, and program managers to accelerate innovation at Microsoft through trustworthy analysis and experimentation.

But Ronny is not just an experimentation expert! In the past, he was Director of Data Mining and Personalization at Amazon, so he also has tons of experience in the area of personalization.

Here is what Ronny said about how he sees experimentation and personalization being connected:

The beauty of these two domains and why I’m attracted to both is that personalization or any predictive model that you have to try to help users – whether it’s personalized to the user or whether it’s contextualized to the population – you’ve got to use controlled experiments because they’re the best scientific method that we know today to evaluate whether what you’re building is useful. These two go hand-in-hand.

Basically, what Ronny is saying is that utilizing controlled experiments is the best way to see if your airline personalization initiatives work. And it doesn’t stop with personalization; it’s the same with your machine learning activities:

I’ll say even broader, it’s not just personalization; it’s any machine learning algorithm that you’ve built to do something. The best way to evaluate iterations of the model is through controlled experiments. We see that today. As machine learning and AI are being used more and more in the industry, obviously people are starting to use controlled experiments more heavily to evaluate those models and to be able to launch the challenger to the current champion.

It makes sense! If you want to be sure your personalization activities work, you need to measure and test. Because if you’re not testing, you’re basically guessing.

Experiments are the key to seeing which of your new digital product launches and new personalization algorithms work. What surprised me is the ratio of success Ronny experienced when running such experiments at Microsoft:

The overall statistics that we found at Microsoft over a large number of experiments is that about one-third of them are actually successful, meaning they improve that key metric in a statistically significant manner. One-third tend to be neutral; you’ve done something, it doesn’t move the key metric enough. And then there’s one-third, which I think is the most surprising part, one-third of the experiments actually hurt.

Now imagine: two-thirds of the things you are doing could have no effect or even a negative one.
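To make “statistically significant” a bit more concrete, here is a minimal Python sketch of how a single A/B test on conversion rate could be sorted into one of Ronny’s three buckets. It uses a standard two-proportion z-test. The function names, the 5% significance threshold, and the sample numbers are illustrative assumptions, not anything from the interview or from Microsoft’s platform.

```python
import math


def two_proportion_z_test(conv_control, n_control, conv_variant, n_variant):
    """Return (z, two-sided p-value) for the difference in conversion rates."""
    p_control = conv_control / n_control
    p_variant = conv_variant / n_variant
    # Pooled conversion rate under the null hypothesis of "no difference"
    p_pool = (conv_control + conv_variant) / (n_control + n_variant)
    std_err = math.sqrt(p_pool * (1 - p_pool) * (1 / n_control + 1 / n_variant))
    z = (p_variant - p_control) / std_err
    # Two-sided p-value from the standard normal distribution
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


def classify_experiment(z, p_value, alpha=0.05):
    """Bucket a result as 'win', 'neutral', or 'loss' (alpha is an assumed threshold)."""
    if p_value >= alpha:
        return "neutral"
    return "win" if z > 0 else "loss"


# Hypothetical numbers: 20,000 visitors per group
z, p = two_proportion_z_test(1000, 20000, 1100, 20000)
print(classify_experiment(z, p))
```

A real experimentation program adds much more on top of this (sample size planning, guardrail metrics, checks for broken randomization), but the basic idea is the same: a change only counts as a win if the lift is unlikely to be noise.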

What’s more, the above statistics Ronny talked about are from all experiments performed. When you talk about personalization, Ronny says you need to have even more realistic expectations. It will take a lot of knowledge and experience and many online experiments to make your airline personalization work:

I do think it’s also important to realize that personalization, while highly beneficial, is not going to result in some of the high expectations that people have out there that this will increase your revenues by 50%.

One, you have to take a realistic view that yeah, you’re going to get 10%, 15%, 20% improvement from doing personalization, but it takes a while to build it correctly. It takes a while to evolve it, come up with the right tradeoffs to make sure that you show it at the right time and you don’t annoy users and you’re able to properly handle some of the cold start problems that happen on new products.

With personalization especially, the more you optimize, the harder it is to find new changes that significantly improve your results. This means you’ll need to run even more experiments to get incremental growth in the long run:

So this idea of approximately one-third, one-third, one-third was true for some of the products that we worked with. In areas that have been optimized for a while, like Bing, hundreds of experiments run concurrently all the time, this program has been running for several years, it’s actually harder to find a successful example. That one-third that I quoted in the beginning was actually decreasing over time, and we ended up with a success ratio much closer to about 10-20% of ideas that were actually beneficial, meaning improving the metrics that we had set.

By now, I hope you can see that the first and biggest mistake you can make when it comes to optimization and personalization is not testing.

But there is a second and almost as important thing that people fail to do right. Do you want to know what that is?

The second biggest mistake you can make when it comes to optimization and personalization is optimizing for the wrong metric. If you’ve ever worked for an airline, you know there are many departments that often work in silos. This often results in many departments working on optimizing their own metrics; however, when you look at the overall results, they’re not necessarily positive.

For example, your digital marketing department might optimize ads and work on click-through rates (CTR), analyze cost-per-click (CPC) metrics, and measure acquisition costs (CPA). This often means promoting the lowest fares to achieve higher conversion and lower CPA. On the other hand, the revenue management department usually optimizes for the best possible price and highest yields per seat. Now add on the ancillary revenue department, which wants to boost ancillary revenue and ancillary attachment rates, and you can see how some of these metrics can contradict each other.

Personally, I’ve seen cases where airline email, ancillary, and loyalty teams were “fighting” about email frequency and how many ancillary product ads to include in their email campaigns (Ronny shares a great example of how Amazon managed this challenge later in this article). Each department had its own goals and metrics and tried to optimize them.

Sound familiar?

Siloed goals often lead teams to optimize vanity metrics that make sense for a certain department but, in the end, not for the customers. These are, as Barclay Rae calls them, watermelon metrics: “[While] teams think they are doing a great job hitting green targets, their customers view it quite differently and only see red.”

Ronny put it similarly in our interview:

[These metrics] tend to look green, but really when you dig inside, there’s a lot of redness.

To illustrate this challenge, Ronny shared an interesting example from the Amazon email personalization program. When they looked at just revenue, most experiments were positive:

When I joined Amazon, we did run controlled experiments on email, and that was done even before my time, but they were generally positive. Anybody that came up with an idea of, “Hey, let’s email users who bought from a single author of some book, let’s mail them if that author comes up with a second book.” Seems reasonable. Started an email program, runs great. We make revenue, even relative to the control group. Then you introduce another program and you say, “Let’s find similarities,” use an association algorithm or even just one-to-one recommendations, and then you start another email campaign. As you add more and more campaigns, they all seem like they’re positives, but you start to hear from users that we’re spamming them too much. That’s where you have to say there is a tradeoff here. Every user that unsubscribes from our emails, we lose the ability to market to them through this email channel. We lose the lifetime value of that channel, and that could be pretty important.

Once they added better criteria to evaluate the effectiveness of their campaigns, the experiment results were seen in a different light:

We said, what is the lifetime value of the email channel for a user that unsubscribes? Then for every campaign, we evaluated the percentage of people that unsubscribed multiplied by that lifetime value that we just lost and compared that to the lift from the emails. Shocking as it was to many, many people, most of our email campaigns were actually negative under that metric. It was an amazing insight and a way to control, to find that countervailing metric that helps you understand that you can’t just have a metric that’s monotonically increasing with an action because you’ll just do that more and more. There has to be some sort of a balancing metric that tells you that you’re not doing as well.

Long term, this learning and the better metric enabled them to come up with significantly better, higher-quality campaigns with a lower cost of unsubscribing.
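As a rough illustration of the countervailing metric Ronny describes, the sketch below subtracts the value lost to unsubscribes from a campaign’s revenue lift. The function name, parameter names, and all numbers are hypothetical; Amazon’s actual calculation is not described in more detail than the quote above.

```python
def net_campaign_value(revenue_lift, recipients, unsubscribe_rate, channel_ltv):
    """Revenue lift versus control, minus the email-channel lifetime value lost to unsubscribes.

    All inputs are assumptions for illustration:
      revenue_lift      incremental revenue of the campaign relative to the control group
      recipients        number of users who received the campaign
      unsubscribe_rate  share of recipients who unsubscribed because of it (0.005 = 0.5%)
      channel_ltv       estimated lifetime value of the email channel per user
    """
    unsubscribe_cost = recipients * unsubscribe_rate * channel_ltv
    return revenue_lift - unsubscribe_cost


# Hypothetical campaign: positive on revenue alone, negative once unsubscribes are priced in
net = net_campaign_value(
    revenue_lift=5000.0,
    recipients=100_000,
    unsubscribe_rate=0.005,
    channel_ltv=15.0,
)
print(f"Net campaign value: {net:.2f}")
```

With these made-up inputs, the campaign looks like a winner if you only track revenue, yet it comes out negative under the balanced metric. The same pattern could apply to airline email programs where several teams each add their own campaigns.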

If you read our interview with another book author and experimentation leader, Stefan Thomke, you saw how experimentation really enables innovation. In Ronny’s view, it starts by enabling your user experience:

I think experimentation is fundamental to using data to help improve experiences for the business and for the users.

However, experimentation goes beyond that. Here is a quote from Ronny’s book:

Running trustworthy controlled experiments is the scientific gold standard in evaluating many (but not all) ideas and making data-informed decisions. What may be less clear is that making controlled experiments easy to run also accelerates innovation by decreasing the cost of trying new ideas and learning from them in a virtuous feedback loop.

Listen to the full Diggintravel Podcast interview with Ronny to learn more.

I am passionate about digital marketing and ecommerce, with more than 10 years of experience as a CMO and CIO in travel and multinational companies. I work as a strategic digital marketing and ecommerce consultant for global online travel brands. Constant learning is my main motivation, and this is why I launched Diggintravel.com, a content platform for travel digital marketers to obtain and share knowledge. If you want to learn or work with me, check our Academy (learning with me) and Services (working with me) pages in the main menu of our website.
